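Contents

- 💛How load balancing and failover work

- 💛Failover

- Case requirements:

- 0. Preparation

- 1. Create the group2 folder under /opt/modules/flume-1.7.0/job

- 2. Edit the files

- 3. Run the configuration files

- 💛Load balancing

- Case requirements:

- 0. Step 0 was already done above, so skip it here

- 1. Copy group2

- 2. Edit the files

- 3. Run the configuration files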

💛How load balancing and failover work

For background, see the earlier post on Flume's definition, component architecture, and internals.

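💛Failover

Case requirements:

Flume1 monitors a port, and the sinks in its sink group connect to Flume2 and Flume3 respectively. The sink group uses the FailoverSinkProcessor to provide failover.

0. Preparation

For Flume to write data to HDFS, it must have the relevant Hadoop jars on its classpath, much as a Java program needs a JDBC driver to write to MySQL:

commons-configuration-1.6.jar

hadoop-auth-2.7.2.jar

hadoop-common-2.7.2.jar

hadoop-hdfs-2.7.2.jar

commons-io-2.4.jar

htrace-core-3.1.0-incubating.jar

Note: if your Hadoop version is different, swap in the jars that match it (search online for how to find them).

Copy the jars listed above into the /opt/modules/flume-1.7.0/lib folder.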
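The copy step could be scripted roughly as follows. This is only a sketch: the `copy_hadoop_jars` helper is hypothetical, and the source directory (for example `$HADOOP_HOME/share/hadoop`) is an assumption about where your Hadoop installation keeps its jars.

```shell
# Hypothetical helper: search a Hadoop directory tree for the jars listed above
# and copy each one it finds into Flume's lib directory.
copy_hadoop_jars() {
  src_root="$1"    # assumed location of the Hadoop jars, e.g. "$HADOOP_HOME/share/hadoop"
  flume_lib="$2"   # e.g. /opt/modules/flume-1.7.0/lib
  for jar in commons-configuration-1.6.jar hadoop-auth-2.7.2.jar \
             hadoop-common-2.7.2.jar hadoop-hdfs-2.7.2.jar \
             commons-io-2.4.jar htrace-core-3.1.0-incubating.jar; do
    found="$(find "$src_root" -type f -name "$jar" | head -n 1)"
    if [ -n "$found" ]; then
      cp "$found" "$flume_lib/"
    else
      # Report jars that were not found so they can be fetched separately.
      echo "missing: $jar" >&2
    fi
  done
}

# Usage (both paths are assumptions about your environment):
# copy_hadoop_jars "$HADOOP_HOME/share/hadoop" /opt/modules/flume-1.7.0/lib
```

Any jar reported as missing has to be obtained some other way, for instance from the download link below.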

Private download link

Link: https://caiyun.139.com/m/i?185CkuBAdN6dp

Access code: xwAr


1. Create the group2 folder under /opt/modules/flume-1.7.0/job

(For where the job folder comes from, see the earlier post.)

mkdir group2

cd group2

touch flume1.conf

touch flume2.conf

touch flume3.conf

2. Edit the files

Edit flume1.conf

# Name the components on this agent

a1.sources = r1

a1.channels = c1

a1.sinkgroups = g1

a1.sinks = k1 k2

# Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = localhost

a1.sources.r1.port = 44444

a1.sinkgroups.g1.processor.type = failover

a1.sinkgroups.g1.processor.priority.k1 = 5

a1.sinkgroups.g1.processor.priority.k2 = 10

a1.sinkgroups.g1.processor.maxpenalty = 10000

# Describe the sink

a1.sinks.k1.type = avro

a1.sinks.k1.hostname = hadoop201

a1.sinks.k1.port = 4141

a1.sinks.k2.type = avro

a1.sinks.k2.hostname = hadoop201

a1.sinks.k2.port = 4142

# Describe the channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinkgroups.g1.sinks = k1 k2

a1.sinks.k1.channel = c1

a1.sinks.k2.channel = c1
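With the failover processor, the sink with the larger priority value is tried first, so in the config above k2 (priority 10, pointing at port 4142, i.e. Flume3) is the active sink and k1 only takes over when k2 fails. As a quick sanity check, a small helper like the one below can print which sink a config file ranks highest. The `highest_priority_sink` function is hypothetical, not part of Flume, and it assumes property lines have no leading whitespace.

```shell
# Hypothetical helper: print the name of the sink with the highest
# failover priority found in a Flume properties file.
highest_priority_sink() {
  awk -F'[ =]+' '
    # Match uncommented lines such as: a1.sinkgroups.g1.processor.priority.k2 = 10
    /^[^#]*\.processor\.priority\./ {
      n = split($1, parts, ".")          # the last key segment is the sink name
      if (best == "" || $2 + 0 > max) { max = $2 + 0; best = parts[n] }
    }
    END { print best }
  ' "$1"
}

# Usage (run from /opt/modules/flume-1.7.0):
# highest_priority_sink job/group2/flume1.conf
```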

Edit flume2.conf

# Name the components on this agent

a2.sources = r1

a2.sinks = k1

a2.channels = c1

# Describe/configure the source

a2.sources.r1.type = avro

a2.sources.r1.bind = hadoop201

a2.sources.r1.port = 4141

# Describe the sink

a2.sinks.k1.type = logger

# Describe the channel

a2.channels.c1.type = memory

a2.channels.c1.capacity = 1000

a2.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a2.sources.r1.channels = c1

a2.sinks.k1.channel = c1

Edit flume3.conf

# Name the components on this agent

a3.sources = r1

a3.sinks = k1

a3.channels = c2

# Describe/configure the source

a3.sources.r1.type = avro

a3.sources.r1.bind = hadoop201

a3.sources.r1.port = 4142

# Describe the sink

a3.sinks.k1.type = logger

# Describe the channel

a3.channels.c2.type = memory

a3.channels.c2.capacity = 1000

a3.channels.c2.transactionCapacity = 100

# Bind the source and sink to the channel

a3.sources.r1.channels = c2

a3.sinks.k1.channel = c2

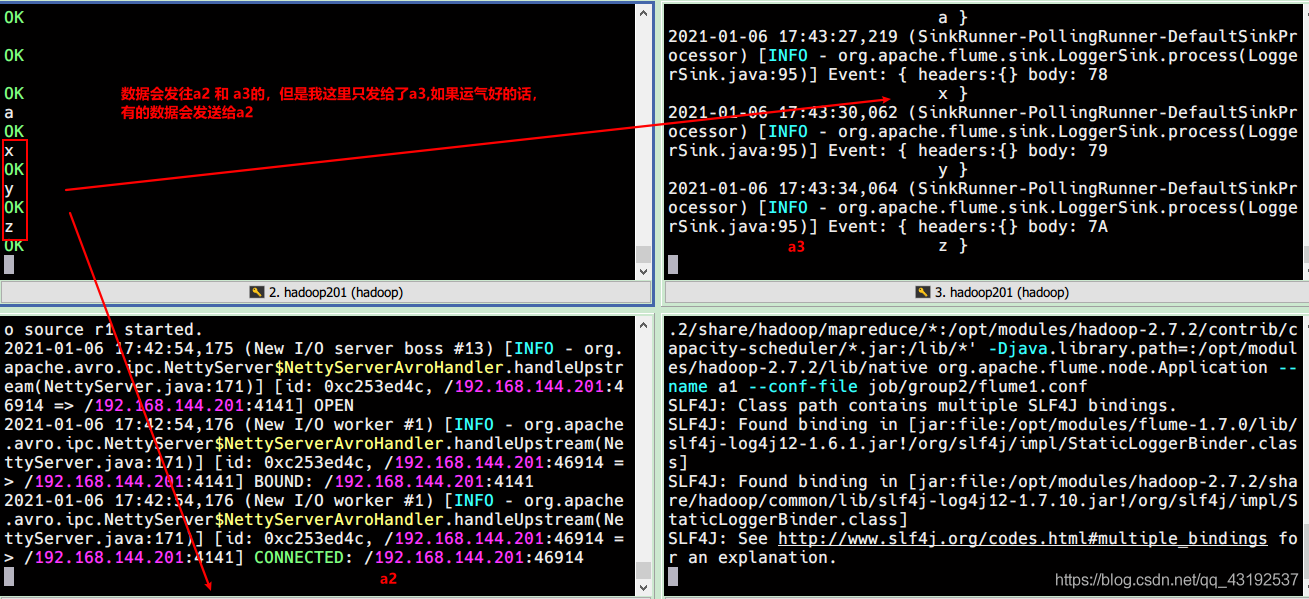

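3. Run the configuration files

Open four terminal windows in the Flume home directory (/opt/modules/flume-1.7.0) and run one of the following commands in each, starting the downstream agents (Flume3 and Flume2) before Flume1: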

bin/flume-ng agent --conf conf/ --name a3 --conf-file job/group2/flume3.conf -Dflume.root.logger=INFO,console

bin/flume-ng agent --conf conf/ --name a2 --conf-file job/group2/flume2.conf -Dflume.root.logger=INFO,console

bin/flume-ng agent --conf conf/ --name a1 --conf-file job/group2/flume1.conf

nc localhost 44444
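To actually watch the failover happen, type a few lines into the nc window (they should show up in the Flume3 console, since k2 has the higher priority), then stop Flume3 and type a few more, which should now show up in the Flume2 console. Pressing Ctrl+C in the Flume3 window is enough; the sketch below is an alternative that finds the agent's PID from `jps -ml` output. The `flume_agent_pid` helper is hypothetical, and it assumes the JDK's `jps` is on your PATH and that the agent's main class is listed as org.apache.flume.node.Application.

```shell
# Hypothetical helper: read `jps -ml` style output on stdin and print the PID
# of the Flume agent that was started with the given config file.
flume_agent_pid() {
  awk -v conf="$1" '
    index($0, "org.apache.flume.node.Application") && index($0, conf) { print $1 }
  '
}

# Usage (assumes jps is available):
# pid="$(jps -ml | flume_agent_pid job/group2/flume3.conf)"
# kill "$pid"    # stop Flume3, then send more lines through nc
```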

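💛Load balancing

Case requirements:

Flume1 monitors a port, and the sinks in its sink group connect to Flume2 and Flume3 respectively. The sink group uses the load_balance processor to provide load balancing.

0. Step 0 was already done above, so skip it here

1. Copy group2 to a new folder named group3

2. Edit the files

This time only flume1.conf needs to be edited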

# Name the components on this agent

a1.sources = r1

a1.channels = c1

a1.sinkgroups = g1

a1.sinks = k1 k2

# Describe/configure the source

a1.sources.r1.type = netcat

a1.sources.r1.bind = localhost

a1.sources.r1.port = 44444

# Modified section

a1.sinkgroups.g1.processor.type = load_balance

a1.sinkgroups.g1.processor.backoff = true

a1.sinkgroups.g1.processor.selector = random

# Describe the sink

a1.sinks.k1.type = avro

a1.sinks.k1.hostname = hadoop201

a1.sinks.k1.port = 4141

a1.sinks.k2.type = avro

a1.sinks.k2.hostname = hadoop201

a1.sinks.k2.port = 4142

# Describe the channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel

a1.sources.r1.channels = c1

a1.sinkgroups.g1.sinks = k1 k2

a1.sinks.k1.channel = c1

a1.sinks.k2.channel = c1
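Flume reads only the properties that belong to the agent named with --name, so a key with a mistyped prefix (for example `al` instead of `a1`) can go unnoticed. A rough check along these lines can flag such lines before you start the agent. The `check_agent_prefix` helper is hypothetical, and it only checks the agent-name prefix, not a mistyped sink group or sink name.

```shell
# Hypothetical helper: print every property line whose key does not start
# with the expected agent name. No output means every key has the right prefix.
check_agent_prefix() {
  conf="$1"
  agent="$2"
  awk -v agent="$agent" '
    /^[[:space:]]*(#|$)/ { next }    # skip comments and blank lines
    index($1, agent ".") != 1 { print FILENAME ":" FNR ": " $0 }
  ' "$conf"
}

# Usage (run from /opt/modules/flume-1.7.0):
# check_agent_prefix job/group3/flume1.conf a1
```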

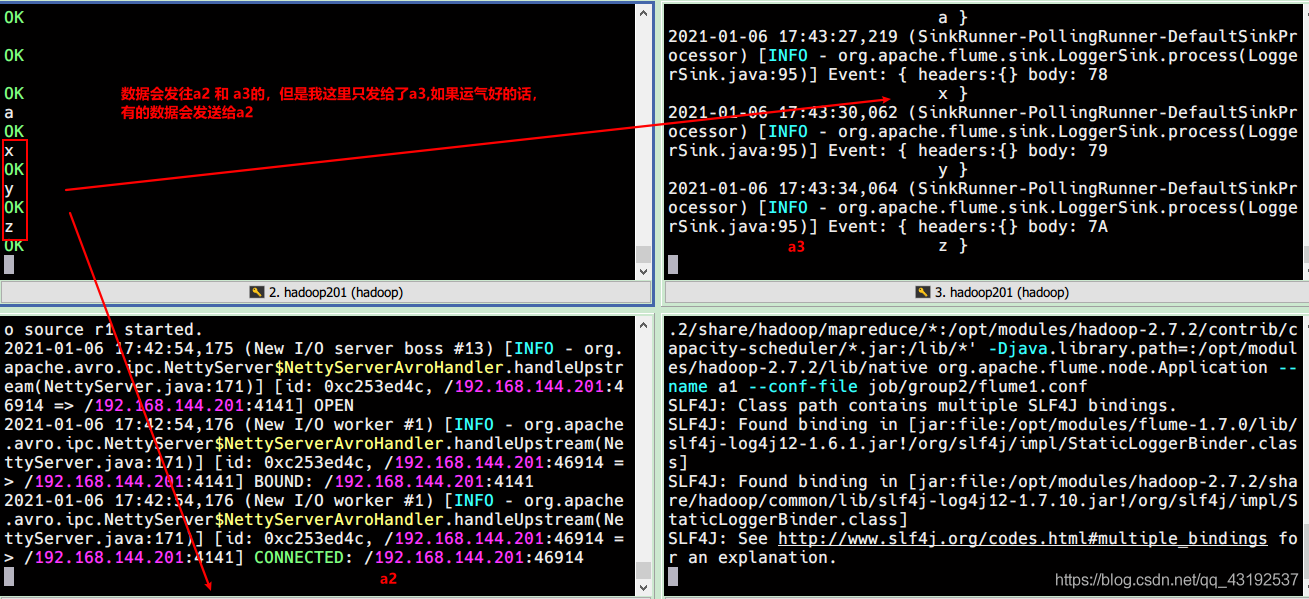

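3. Run the configuration files

Open four terminal windows in the Flume home directory (/opt/modules/flume-1.7.0) and run one of the following commands in each, starting the downstream agents (Flume3 and Flume2) before Flume1: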

bin/flume-ng agent --conf conf/ --name a3 --conf-file job/group3/flume3.conf -Dflume.root.logger=INFO,console

bin/flume-ng agent --conf conf/ --name a2 --conf-file job/group3/flume2.conf -Dflume.root.logger=INFO,console

bin/flume-ng agent --conf conf/ --name a1 --conf-file job/group3/flume1.conf

nc localhost 44444
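Typing lines by hand makes it hard to see how events are spread across the two agents. One option is to pipe a batch of numbered lines into nc and then compare what appears in the Flume2 and Flume3 consoles. The `gen_events` helper below is hypothetical; also note that each sink takes events from the channel in batches, so the split between the two consoles will not necessarily be even.

```shell
# Hypothetical helper: print N numbered test lines, one event per line.
gen_events() {
  i=1
  while [ "$i" -le "$1" ]; do
    echo "event $i"
    i=$((i + 1))
  done
}

# Usage (assumes Flume1 is listening on localhost:44444 as configured above):
# gen_events 20 | nc localhost 44444
```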