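Preface

I have written about Kubernetes before.

See: Kubernetes Introductory and Advanced Course

This article supplements that material by deploying a cluster from binary packages on newer versions of the operating system and software.

For the deployment on the earlier versions, see:

Kubernetes Platform Environment Planning

Operating system and software versions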

| Software | Version |
| --- | --- |
| Linux operating system | CentOS Stream 9 |
| Kubernetes | 1.28.4 |
| Docker | 24.0.7 |
| Etcd | 3.5.10 |
| Flannel | 0.23.0 |
| Harbor | 2.9.1 |
| ELK | 8.11.0 |
| glusterfs | 10.4 |

Server planning

| Role | IP | Components and services | Hostname | Recommended spec |
| --- | --- | --- | --- | --- |
| master01 | 192.168.3.21 | kube-apiserver kube-controller-manager kube-scheduler etcd ELK glusterfs | CentOSStream9K8SMaster01021 | 4C8G |
| master02 | 192.168.3.22 | kube-apiserver kube-controller-manager kube-scheduler etcd ELK glusterfs | CentOSStream9K8SMaster02022 | 4C8G |
| master03 | 192.168.3.23 | kube-apiserver kube-controller-manager kube-scheduler etcd Harbor | CentOSStream9K8SMaster03023 | 4C8G |
| node01 | 192.168.3.121 | kubelet kube-proxy docker flannel | CentOSStream9K8SNode01121 | 4C8G |
| node02 | 192.168.3.122 | kubelet kube-proxy docker flannel | CentOSStream9K8SNode02122 | 4C8G |
| node03 | 192.168.3.123 | kubelet kube-proxy docker flannel | CentOSStream9K8SNode03123 | 4C8G |
| node04 | 192.168.3.124 | kubelet kube-proxy docker flannel | CentOSStream9K8SNode04124 | 4C8G |

Self-Signed SSL Certificates

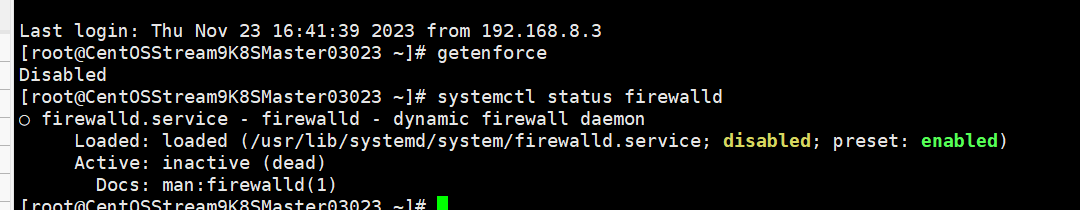

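Disable the firewall and SELinux before deploying

# Stop and disable the firewall

systemctl stop firewalld

systemctl disable firewalld

# Disable SELinux

setenforce 0

sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

Confirm the result after disabling them.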
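One way to do that confirmation is sketched below. The function names `selinux_config_mode` and `report_runtime_state` are made up for this example; `getenforce` and `systemctl is-active` are the standard tools, and they are only called when present on the host.

```shell
#!/bin/sh
# Print the mode configured by the SELINUX= line of an SELinux config file.
# The path defaults to /etc/selinux/config; another path can be passed instead.
selinux_config_mode() {
    cfg="${1:-/etc/selinux/config}"
    # Keep only uncommented SELINUX= lines and strip the key.
    sed -n 's/^SELINUX=//p' "$cfg" | tail -n 1
}

# Report the runtime state, but only with tools that exist on this host.
report_runtime_state() {
    if command -v getenforce >/dev/null 2>&1; then
        echo "SELinux (runtime): $(getenforce)"
    fi
    if command -v systemctl >/dev/null 2>&1; then
        echo "firewalld: $(systemctl is-active firewalld 2>/dev/null)"
    fi
}

# Typical use on a node:
#   selinux_config_mode      # configured mode, applies after the next reboot
#   report_runtime_state     # current runtime state
```

Note that `setenforce 0` only switches the running system to permissive mode, so the runtime check is expected to report Permissive until the host is rebooted with the edited config file.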

Self-signed SSL certificates

| Component | Certificates used |
| --- | --- |
| etcd | ca.pem server.pem server-key.pem |
| flannel | ca.pem server.pem server-key.pem |
| kube-apiserver | ca.pem server.pem server-key.pem |
| kubelet | ca.pem ca-key.pem |
| kube-proxy | ca.pem kube-proxy.pem kube-proxy-key.pem |
| kubectl | ca.pem admin.pem admin-key.pem |

Etcd Database Cluster Deployment

Download the cfssl tools

Download page:

https://github.com/cloudflare/cfssl/releases

Three tools are needed:

cfssl

cfssljson

cfssl-certinfo

Download the build that matches your operating system. This walkthrough uses the Linux AMD64 build of version 1.6.4, the latest at the time of writing.

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssl_1.6.4_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssljson_1.6.4_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssl-certinfo_1.6.4_linux_amd64

Downloads from GitHub can be unreliable.
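Because the downloads can stall, one option is to let wget resume and retry. The sketch below assumes wget is installed; the helper names `cfssl_url` and `download_cfssl` are invented for illustration, and the URL pattern is simply the one used in the three wget lines above.

```shell
#!/bin/sh
# Build the release URL for one cfssl tool and version.
cfssl_url() {
    tool="$1"; version="$2"
    echo "https://github.com/cloudflare/cfssl/releases/download/v${version}/${tool}_${version}_linux_amd64"
}

# Download all three tools, resuming partial files and retrying on failure.
download_cfssl() {
    version="${1:-1.6.4}"
    for tool in cfssl cfssljson cfssl-certinfo; do
        # -c resumes a partial file, --tries retries, --timeout bounds each attempt
        wget -c --tries=5 --timeout=30 "$(cfssl_url "$tool" "$version")" || return 1
    done
}

# Typical use:
#   download_cfssl 1.6.4
```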

Set permissions and install as system commands

# Add execute permission

chmod +x cfssl_1.6.4_linux_amd64 cfssljson_1.6.4_linux_amd64 cfssl-certinfo_1.6.4_linux_amd64

# Copy into the PATH under short names so they can be called directly

cp cfssl_1.6.4_linux_amd64 /usr/local/bin/cfssl

cp cfssljson_1.6.4_linux_amd64 /usr/local/bin/cfssljson

cp cfssl-certinfo_1.6.4_linux_amd64 /usr/local/bin/cfssl-certinfo

Generate the JSON files

They are placed in /data/softs/k8s/Deploy/ssl/192.168.3.21/etcd-ssl

Two JSON configuration files are used, with the certificate validity set to 10 years.
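The expiry value is given in hours, so the 10-year figure can be checked with a line of shell arithmetic. The helper name `years_to_expiry` is hypothetical, and the calculation assumes 365-day years (leap days are ignored).

```shell
#!/bin/sh
# Convert a validity period in years into an hour-based expiry string.
# Assumes 365-day years, so leap days are not counted.
years_to_expiry() {
    echo "$(( $1 * 365 * 24 ))h"
}

years_to_expiry 10   # prints 87600h
```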

ca-config.json

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

ca-csr.json

cat > ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

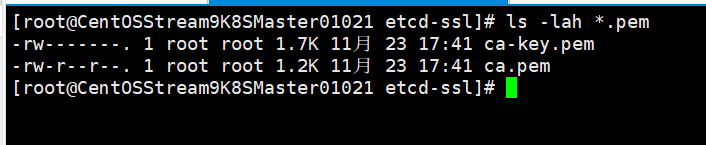

EOF生成ca证书

# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2023/11/23 17:41:05 [INFO] generating a new CA key and certificate from CSR

2023/11/23 17:41:05 [INFO] generate received request

2023/11/23 17:41:05 [INFO] received CSR

2023/11/23 17:41:05 [INFO] generating key: rsa-2048

2023/11/23 17:41:05 [INFO] encoded CSR

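2023/11/23 17:41:05 [INFO] signed certificate with serial number 400770175114283344237417392918071586556385225070

This creates two certificate files in the current directory: ca.pem and ca-key.pem.

Generate the etcd server certificate. With no domain name available, IP addresses are used instead; the three servers running etcd are 192.168.3.21, 192.168.3.22, and 192.168.3.23.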

cat > server-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"192.168.3.21",

"192.168.3.22",

"192.168.3.23"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

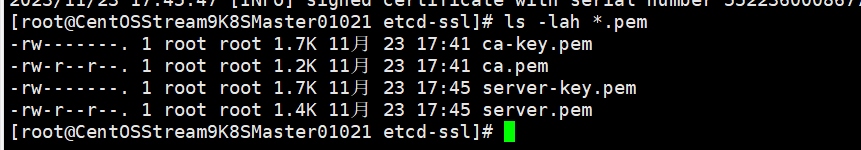

EOF生成证书

# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json|cfssljson -bare server

2023/11/23 17:45:46 [INFO] generate received request

2023/11/23 17:45:46 [INFO] received CSR

2023/11/23 17:45:46 [INFO] generating key: rsa-2048

2023/11/23 17:45:47 [INFO] encoded CSR

2023/11/23 17:45:47 [INFO] signed certificate with serial number 552236000867742058814244208498886652630551379102

Between them, the two commands produce four .pem files: ca.pem, ca-key.pem, server.pem, and server-key.pem.
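Before copying the certificates anywhere, it may be worth confirming that all four files exist and are non-empty. This is a minimal sketch; `missing_pems` is a made-up helper name, and the file list is the one from the sentence above.

```shell
#!/bin/sh
# List which of the four expected PEM files are missing or empty in a directory.
# Prints nothing and returns 0 when all four are present.
missing_pems() {
    dir="${1:-.}"
    status=0
    for f in ca.pem ca-key.pem server.pem server-key.pem; do
        if [ ! -s "$dir/$f" ]; then
            echo "missing: $f"
            status=1
        fi
    done
    return $status
}

# Typical use, from the directory where cfssl was run:
#   missing_pems . && echo "all four certificates present"
```

The contents of a certificate, including the IP addresses it was issued for, can then be inspected with `cfssl-certinfo -cert server.pem`.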

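Deploying etcd

Run the following steps on all three etcd nodes (.21, .22, and .23); the only difference between the nodes is the IP configuration.

Download the binary package from:

https://github.com/etcd-io/etcd/releases

Download the latest version, 3.5.10.

Create the directories for the etcd binaries, configuration files, and SSL certificates

mkdir /opt/etcd/{bin,cfg,ssl} -p

Unpack the archive

tar -xf etcd-v3.5.10-linux-amd64.tar.gz

Copy the executables to the target directory

cp etcd-v3.5.10-linux-amd64/etcd etcd-v3.5.10-linux-amd64/etcdctl /opt/etcd/bin/

Create the etcd configuration file with the following content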

# cat /opt/etcd/cfg/etcd

ETCD_NAME="etcd01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.3.21:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.3.21:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.3.21:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.3.21:2379"

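ETCD_INITIAL_CLUSTER="etcd01=https://192.168.3.21:2380,etcd02=https://192.168.3.22:2380,etcd03=https://192.168.3.23:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

Configuration variables

ETCD_NAME: node name

ETCD_DATA_DIR: data directory

ETCD_LISTEN_PEER_URLS: listen address for cluster (peer) traffic

ETCD_LISTEN_CLIENT_URLS: listen address for client access

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

ETCD_INITIAL_CLUSTER: addresses of the cluster members

ETCD_INITIAL_CLUSTER_TOKEN: cluster token

ETCD_INITIAL_CLUSTER_STATE: state when joining the cluster; new creates a new cluster, existing joins one that already exists

Create the systemd unit file for etcd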

# cat /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/cfg/etcd

ExecStart=/opt/etcd/bin/etcd \

--cert-file=/opt/etcd/ssl/server.pem \

--key-file=/opt/etcd/ssl/server-key.pem \

--peer-cert-file=/opt/etcd/ssl/server.pem \

--peer-key-file=/opt/etcd/ssl/server-key.pem \

--trusted-ca-file=/opt/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

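#elias=rc-local.service

The unit file for 3.5.10 differs from the one used with 3.3.10. The settings that the 3.3.10 unit file passed as flags in ExecStart are already defined as variables in /opt/etcd/cfg/etcd, and 3.5.10 does not allow the same setting to be given twice, so those flags are simply removed from the unit file.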
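When adapting an older unit file, a quick check for such duplicates can help. The sketch below relies on etcd's naming convention, in which the variable ETCD_SOME_NAME corresponds to the flag --some-name; the function name `find_duplicate_settings` is invented for this example.

```shell
#!/bin/sh
# Report ETCD_* variables in an env file that are also passed as flags
# in a unit file. ETCD_SOME_NAME corresponds to the flag --some-name.
find_duplicate_settings() {
    env_file="$1"; unit_file="$2"
    # Extract variable names from uncommented assignments.
    sed -n 's/^\(ETCD_[A-Z0-9_]*\)=.*/\1/p' "$env_file" |
    while read -r var; do
        # ETCD_LISTEN_PEER_URLS -> --listen-peer-urls
        flag="--$(echo "${var#ETCD_}" | tr 'A-Z_' 'a-z-')"
        # Require "=" or a space after the flag so prefixes do not match.
        if grep -q -e "${flag}[= ]" "$unit_file"; then
            echo "$var duplicates $flag"
        fi
    done
}

# Typical use:
#   find_duplicate_settings /opt/etcd/cfg/etcd /usr/lib/systemd/system/etcd.service
```

No output means that none of the variables in the env file is repeated as a flag.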

Troubleshooting reference:

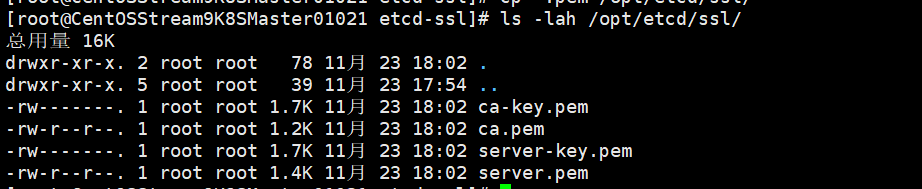

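Copy the .pem certificates generated in the previous step to the certificate directory

cp *.pem /opt/etcd/ssl/

Once the first node, 192.168.3.21, is configured, the directory and the unit file can be copied to the other two etcd servers, 192.168.3.22 and 192.168.3.23, with scp

scp -r /opt/etcd/ root@192.168.3.22:/opt

scp -r /opt/etcd/ root@192.168.3.23:/opt

scp /usr/lib/systemd/system/etcd.service root@192.168.3.22:/usr/lib/systemd/system

scp /usr/lib/systemd/system/etcd.service root@192.168.3.23:/usr/lib/systemd/system

On 192.168.3.22 and 192.168.3.23 everything else stays the same; only the configuration file changes.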
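Since only the member name and its own IP differ between the three files, the file can also be generated rather than edited by hand. This is a sketch only; `render_etcd_cfg` is a hypothetical helper, and the member list is hard-coded to the three addresses used in this article.

```shell
#!/bin/sh
# Print the content of /opt/etcd/cfg/etcd for one member.
# Arguments: member name (etcd01, etcd02, etcd03) and that member's IP.
render_etcd_cfg() {
    name="$1"; ip="$2"
    cat <<EOF
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="etcd01=https://192.168.3.21:2380,etcd02=https://192.168.3.22:2380,etcd03=https://192.168.3.23:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Print the file for the second member; redirect to /opt/etcd/cfg/etcd on that host.
render_etcd_cfg etcd02 192.168.3.22
```

The resulting files for the second and third members are listed in full below.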

192.168.3.22

# cat /opt/etcd/cfg/etcd

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.3.22:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.3.22:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.3.22:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.3.22:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.3.21:2380,etcd02=https://192.168.3.22:2380,etcd03=https://192.168.3.23:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

192.168.3.23

# cat /opt/etcd/cfg/etcd

ETCD_NAME="etcd03"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.3.23:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.3.23:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.3.23:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.3.23:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.3.21:2380,etcd02=https://192.168.3.22:2380,etcd03=https://192.168.3.23:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

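ETCD_INITIAL_CLUSTER_STATE="new"

With etcd deployed, start it and enable it at boot on all three etcd hosts

systemctl daemon-reload

systemctl start etcd

systemctl enable etcd

The first member may appear to hang on start until a second member is up, because the cluster needs a quorum. If startup fails, check that the configuration file is correct and look through /var/log/messages for the cause.

Once etcd is running, check the state of the cluster. Because the certificates are self-signed, the certificate paths must be passed explicitly.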

# Change to the certificate directory

cd /opt/etcd/ssl

# Check the etcd cluster state, passing the certificates as arguments

/opt/etcd/bin/etcdctl --cert=server.pem --cacert=ca.pem --key=server-key.pem --endpoints="https://192.168.3.21:2379,https://192.168.3.22:2379,https://192.168.3.23:2379" endpoint health

Note: the health check in 3.5.10 also differs from 3.3.10, and the flags are different. For comparison, the 3.3.10 health check command is

/opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.3.21:2379,https://192.168.3.22:2379,https://192.168.3.23:2379" cluster-health

Output like the following means the etcd cluster is healthy

# /opt/etcd/bin/etcdctl --cert=server.pem --cacert=ca.pem --key=server-key.pem --endpoints="https://192.168.3.21:2379,https://192.168.3.22:2379,https://192.168.3.23:2379" endpoint health

https://192.168.3.21:2379 is healthy: successfully committed proposal: took = 17.920374ms

https://192.168.3.22:2379 is healthy: successfully committed proposal: took = 22.152852ms

https://192.168.3.23:2379 is healthy: successfully committed proposal: took = 23.160891ms

Troubleshooting: if the log shows

has already been bootstrapped

delete the etcd data directory, recreate it, and restart etcd

rm -rf /var/lib/etcd/

mkdir /var/lib/etcd

Installing Docker on the Nodes

Docker must be installed on every node; this deployment has four node hosts.

# Step 1: install the required system tools

sudo yum install -y yum-utils device-mapper-persistent-data lvm2

# Step 2: add the package repository

sudo yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 3: refresh the metadata cache and install Docker CE

sudo yum makecache

sudo yum -y install docker-ce

# Step 4: start the Docker service

sudo systemctl start docker

# Step 5: enable it at boot

sudo systemctl enable docker

After installation, check the Docker version

# docker --version

Docker version 24.0.7, build afdd53b

Flannel Container Cluster Network Deployment

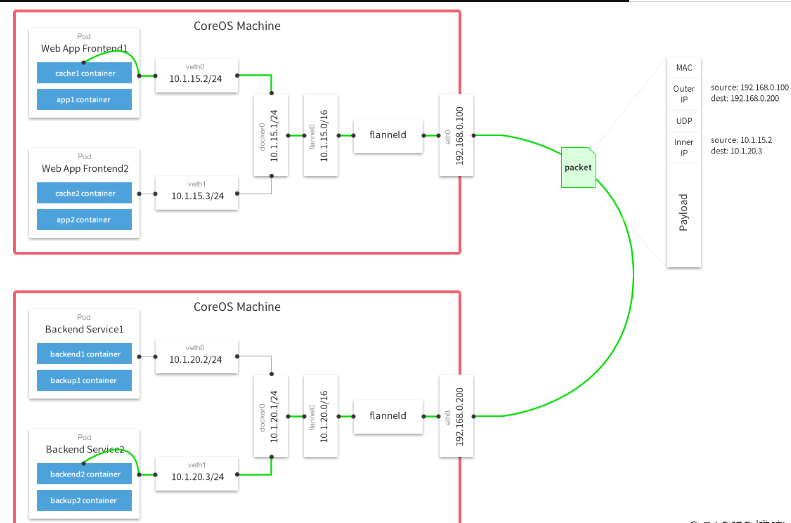

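Basic requirements of the Kubernetes network model

- One IP per pod

- Each pod has its own independent IP

- Every container can communicate with every other container

- Every node can communicate with every container

How flannel works (diagram)

Overlay Network

An overlay network is a virtual network layered on top of the underlying network; the hosts in it are connected by virtual links.

Flannel is one kind of overlay network: it encapsulates the original packet inside another network packet for routing, forwarding, and communication. It currently supports UDP, VXLAN, host-gw, AWS VPC, and GCE routes as forwarding backends.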
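Encapsulation has a cost: every packet carries extra headers, so the overlay interface needs a smaller MTU than the physical one. The arithmetic below is a sketch for the VXLAN backend over an IPv4 underlay; the variable and function names are invented for this example, while the header sizes are the standard ones.

```shell
#!/bin/sh
# Bytes that VXLAN encapsulation adds inside the underlay MTU (IPv4 underlay).
OUTER_IPV4=20   # outer IPv4 header
OUTER_UDP=8     # outer UDP header
VXLAN_HDR=8     # VXLAN header
INNER_ETH=14    # encapsulated (inner) Ethernet header

# Compute the usable overlay MTU from the underlay MTU.
vxlan_mtu() {
    echo $(( $1 - OUTER_IPV4 - OUTER_UDP - VXLAN_HDR - INNER_ETH ))
}

vxlan_mtu 1500   # prints 1450
```

With a 1500-byte physical MTU this gives 1450, which is the value that shows up later as `--mtu=1450` in subnet.env and on the flannel.1 interface.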

Flannel stores its subnet information in etcd, so make sure it can connect to etcd, then write the predefined subnet range.

Run the following on master01 (192.168.3.21)

# Change to the certificate directory

cd /opt/etcd/ssl

# Set the predefined subnet range to 172.17.0.0/16

/opt/etcd/bin/etcdctl --cert=server.pem --cacert=ca.pem --key=server-key.pem --endpoints="https://192.168.3.21:2379,https://192.168.3.22:2379,https://192.168.3.23:2379" put /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

The following output means the setting was written

OK

To read the settings back, replace put with get and drop the JSON value; with --prefix, the subnet leases that flanneld registers once it is running on a node are listed as well

# /opt/etcd/bin/etcdctl --cert=server.pem --cacert=ca.pem --key=server-key.pem --endpoints="https://192.168.3.21:2379,https://192.168.3.22:2379,https://192.168.3.23:2379" get --prefix /coreos.com/network/

/coreos.com/network/config

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

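/coreos.com/network/subnets/172.17.7.0-24

{"PublicIP":"192.168.3.121","PublicIPv6":null,"BackendType":"vxlan","BackendData":{"VNI":1,"VtepMAC":"6e:8b:14:0f:1d:de"}}

Install flannel on every node by repeating the steps below.

This walkthrough downloads and installs 0.23.0, the latest version at the time of writing.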

# Download

wget https://github.com/flannel-io/flannel/releases/download/v0.23.0/flannel-v0.23.0-linux-amd64.tar.gz

# Unpack

tar -xf flannel-v0.23.0-linux-amd64.tar.gz

# Create the Kubernetes directories

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

# Copy the unpacked executables to the target directory

cp flanneld mk-docker-opts.sh /opt/kubernetes/bin/

Create the flannel configuration file

# cat /opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.3.21:2379,https://192.168.3.22:2379,https://192.168.3.23:2379 --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem"

The etcd certificates must be copied to /opt/etcd/ssl, the directory this configuration file points to

cp /data/softs/k8s/Deploy/ssl/192.168.3.21/etcd-ssl/*.pem /opt/etcd/ssl/

Create the systemd unit file for flanneld

# cat /usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start flanneld; once it is running, the subnet information is available in /run/flannel/subnet.env

systemctl daemon-reload

systemctl restart flanneld

systemctl enable flanneld

View the subnet information

# cat /run/flannel/subnet.env

DOCKER_OPT_BIP="--bip=172.17.7.1/24"

DOCKER_OPT_IPMASQ="--ip-masq=false"

DOCKER_OPT_MTU="--mtu=1450"

DOCKER_NETWORK_OPTIONS=" --bip=172.17.7.1/24 --ip-masq=false --mtu=1450"

Configure Docker to use this subnet information by editing Docker's systemd unit file

# cat /usr/lib/systemd/system/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

Restart docker and flanneld

systemctl daemon-reload

systemctl restart docker

systemctl restart flanneld

Check that the settings took effect

# ps aux|grep docker

root 6508 0.0 1.1 1468712 90588 ? Ssl 17:43 0:00 /usr/bin/dockerd --bip=172.17.7.1/24 --ip-masq=false --mtu=1450

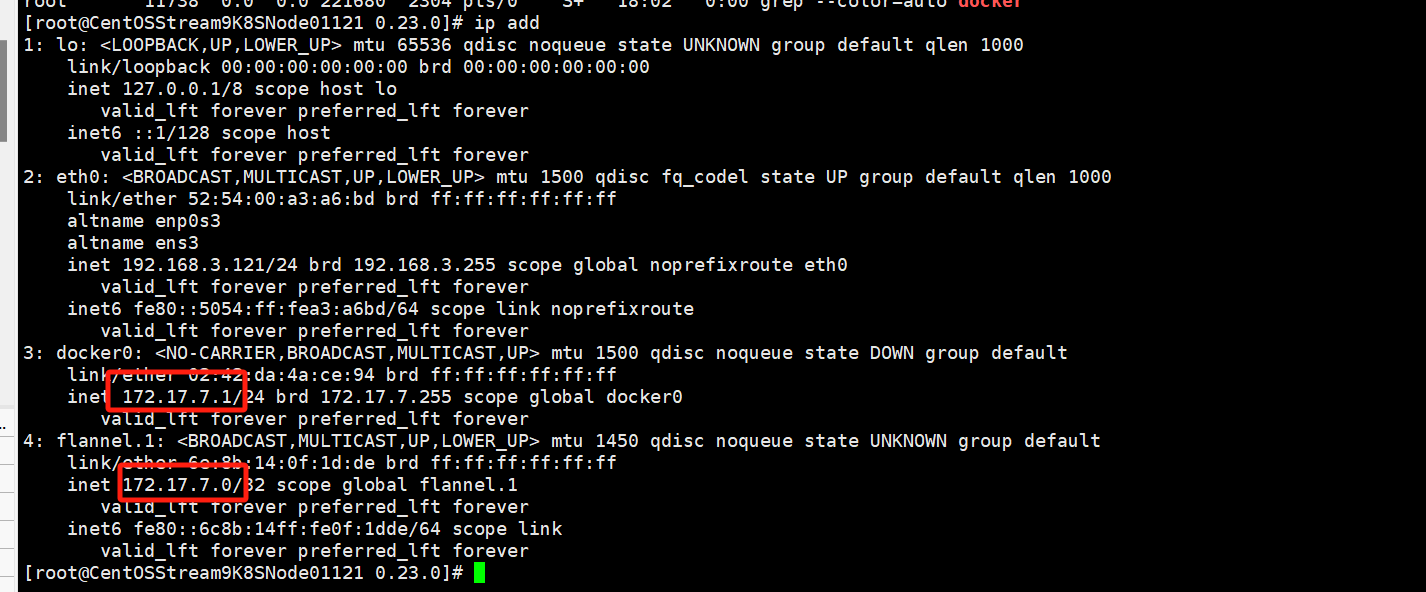

root 11738 0.0 0.0 221680 2304 pts/0 S+ 18:02 0:00 grep --color=auto docker使用ip addr命令可以看到flannel和docker0网卡在同一网段

# ip add

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 52:54:00:a3:a6:bd brd ff:ff:ff:ff:ff:ff

altname enp0s3

altname ens3

inet 192.168.3.121/24 brd 192.168.3.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::5054:ff:fea3:a6bd/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:da:4a:ce:94 brd ff:ff:ff:ff:ff:ff

inet 172.17.7.1/24 brd 172.17.7.255 scope global docker0

valid_lft forever preferred_lft forever

4: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default

link/ether 6e:8b:14:0f:1d:de brd ff:ff:ff:ff:ff:ff

inet 172.17.7.0/32 scope global flannel.1

valid_lft forever preferred_lft forever

inet6 fe80::6c8b:14ff:fe0f:1dde/64 scope link

valid_lft forever preferred_lft forever
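The same-subnet observation can also be checked from subnet.env instead of by eye. This is a sketch that assumes flannel hands each node a /24, as in the output above; the helper names `bip_from_env` and `same_slash24` are made up for illustration.

```shell
#!/bin/sh
# Extract the --bip value (for example 172.17.7.1/24) from a subnet.env file.
bip_from_env() {
    sed -n 's/^DOCKER_OPT_BIP="--bip=\(.*\)"$/\1/p' "$1"
}

# Succeed when two IPv4 addresses share their first three octets (same /24).
same_slash24() {
    [ "${1%.*}" = "${2%.*}" ]
}

# Typical use on node01, with flannel.1's address taken from the ip addr output:
#   bip=$(bip_from_env /run/flannel/subnet.env)
#   same_slash24 "${bip%/*}" 172.17.7.0 && echo "docker0 is inside the flannel subnet"
```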

Repeat the same steps on the other nodes.

Test connectivity between the nodes.
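A simple way to run that test is to ping each node's docker0 address from every other node. The sketch below is only an illustration: `check_peers` and the `PING_CMD` variable are invented names, and apart from 172.17.7.1 (node01's docker0 in the output above) any address passed in is something to read from the other nodes' own subnet.env files.

```shell
#!/bin/sh
# Command used to probe one address; override PING_CMD to change or stub it.
PING_CMD="${PING_CMD:-ping -c 2 -W 2}"

# Probe every address given as an argument and print one result line each.
# Returns non-zero if any address is unreachable.
check_peers() {
    failed=0
    for ip in "$@"; do
        # $PING_CMD is left unquoted on purpose so its options split into words.
        if $PING_CMD "$ip" >/dev/null 2>&1; then
            echo "$ip reachable"
        else
            echo "$ip UNREACHABLE"
            failed=1
        fi
    done
    return $failed
}

# Example call from another node; 172.17.52.1 is a made-up placeholder
# for a second node's docker0 address:
#   check_peers 172.17.7.1 172.17.52.1
```

If every address is reported reachable from every node, the overlay is forwarding traffic between hosts.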